Before I begin, I should note that this article is based on a post I made a few weeks ago in the Hip-Hop Discussion forum. Various users made a lot of good points in that thread, and I will credit them where relevant. The thread itself was a small-scale discussion, but I feel that the entire LEAKED.CX community needs a broader discussion about increasingly sophisticated AI speech synthesis, especially in the context of this forum/marketplace.

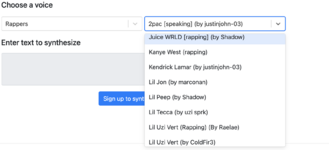

You’re probably familiar with sites such as uberduck.ai

For anybody who isn’t, uberduck.ai is a website that hosts many AI models of celebrity voices, which can be used in a text-to-speech manner. The models are currently hit or miss, depending on how long and how well each one has been trained on examples of the vocals it is trying to replicate. These models are not exclusive to such sites, however; anybody with technical experience in artificial intelligence could feasibly put together a model of their own. This means that anyone with a functioning computer and an internet connection has a chance to create a new voice model.

The issue facing us is that the legitimacy of audio itself is now under threat. As you all know, LEAKED.CX is a website founded upon the principle of legitimate sales. This site, being a well-known marketplace for what are assumed to be genuine, privately owned songs created by celebrity artists, is now in the crosshairs of those who may have the will and technical experience to falsify audio, not just for fun, but to sound so legitimate that a buyer could be scammed out of hundreds, if not thousands, of dollars with no recourse. This will not be an isolated issue, and time will only make it worse.

The future is now

I am certainly far from the first to consider this a possible point of failure for the entire unreleased music market. You may think “the examples of AI voice / song replication are rudimentary at best,” and broadly speaking, for this moment in time, it is true that the vast majority of these models cannot deliver a quality of sound that could fool a buyer out of their money. Unfortunately, AI tends to improve with time. A properly maintained model is unlikely to get worse, and in this case the trait being improved is how closely the audio matches real songs. It will certainly take time for this to develop into a serious threat, but I can guarantee you that as you are reading this article, there are models being trained to replicate your favorite artist’s songs; if there aren’t right now, there will be soon. From the outside looking in, it may seem like a very complicated thing to get into, and one may hope that the complexity alone would be enough to deter scammers, but it is not. Those who understand what they are doing on a technical level, and who have a little faith in the future of the technology powering the model, see before their eyes a true money-printing machine, and the person reading this article is the intended victim.

The depth of the issue

This, of course, will influence not only the future of this website but the music industry as a whole. There will likely come a day when a label decides to train its own model using raw stems from a deceased artist’s project files. Whether or not a public debate over the morality of this takes place, it will likely happen at some point. These industry AIs would be far easier to train, since labels have complete stems, and training with that level of accuracy could produce near-identical replications of an artist’s unique vocal inflections and style of speech.

You may be wondering, what makes industry AI an issue for LEAKED.CX?

(thanks to BaphometicOrder for this contribution to the discussion)

As you know, the process of obtaining unreleased music begins with a song being created in a studio with an artist, after which a myriad of methods can be used to get those files into the hands of a seller. In this context, if an industry AI creates songs that end up in the hands of a seller, and the seller is unaware that they are not legitimate creations, the seller will still sell them and charge the going prices. The result is the circulation of what could be considered a scam song, even though nobody could ever have told the difference in the first place.

We may have to rethink what it means to be scammed.

If this is the future of the market, there will be moments like these where nobody is truly to blame, and yet one could still argue that money was lost on a file that was never truly recorded. Technically speaking, however, especially in the case of stolen industry AI songs, the files would still be exclusive and presumably of very high quality. As backwards as it may sound right now to consider something exclusive when it was never made by the artist you actually wanted, it may be a challenge we face in the coming years if this becomes commonplace and the markets are muddied with fake and real songs that are impossible to tell apart. I’ll let these two quotes speak for themselves:

“is it really a scam if you receive a fully finished unheard song no one would be able to replicate except the person who made it though?”

- LEAKED.CX user Young Thug

“it is the same premise as the artist, the artist made an unheard verse that no one can replicate, and the AI did the same thing”

- LEAKED.CX user BaphometicOrder

That is a very valid argument to make. I think the discussion can go even deeper, though: perhaps it would make more sense, if this ever does become a problem, to create a new section of the marketplace specifically for AI songs, assuming they are user-made or verified to be inauthentic through means we may discover in the future. Imagine a low-price marketplace where songs in the voices of the most famous artists in the world can be bought for dirt cheap, and you are still the only person with the file. In this marketplace, prices could be based on how good the songs turned out after being generated. Imagine being able to buy a $30 “Playboi Carti” song that nobody will ever hear besides you and the creator, and it sounds completely real. Perhaps this marketplace could have a request section, where you could outline the parameters of the track, such as the era, vocal style, and much more, to get exactly what you wanted.

This might even be the deterrent we need to keep the majority of scams out of the true marketplace. But that doesn't remedy the situation entirely. Of course scams will still be attempted, so perhaps we should preemptively come up with a new set of requirements for the song verification process that can help identify such fakes. Whether that would mean running songs through an identification application or requiring sellers to provide more information, I'm personally not sure.

In conclusion, we should keep this in mind going forward, or else this site, and the unreleased music market as a whole, has the potential to implode.

Thank you for your time,

- 03 Greedo